The first and second laws of thermodynamics[1]

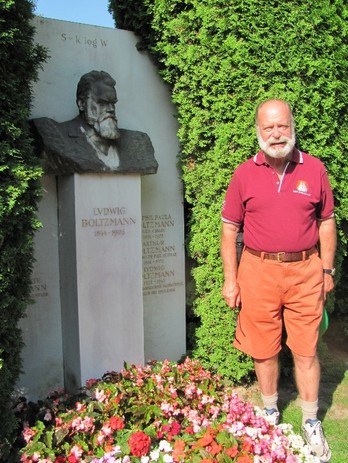

The author at Boltzmann’s grave at Zentralfriedhof cemetery, Vienna, 21 June 2013. Boltzmann’s equation appears above his head on the headstone.

So much for the formation of the universe, its components and how the heavenly bodies came into being. It is now time to consider a few of the more important principles underlying the intricacies of this system and how it works, and the laws of thermodynamics are as good a place to start as any.

Thermodynamics is the study of heat and energy. It originated in efforts to understand and improve the steam engine in the early nineteenth century. In fact, the whole of the second law is framed in terms of the relationship between work, heat and heat loss evident in the functioning of steam trains, steam ships, machinery and the other engineering masterpieces of the industrial age. However, the principles involved also provide a deep insight into the manner in which the universe works as a whole. "In the briefest terms, the second law states that a little energy is always wasted. You can't have a perpetual motion device, because no matter how efficient, it will always lose energy and eventually run down. The first law says that you can't create energy and the third that you can't reduce temperatures to absolute zero: there will always be some residual warmth".[2] Only the first and second are considered here. The effects of these two laws in tandem have been pithily expressed in the aphorism “The energy of the world is constant; the entropy of the world strives to a maximum”[3].

According to the First Law of Thermodynamics (otherwise known as the Law of Energy Conservation), formulated by Rudolf Clausius (1822-1888), a unit of any one kind of energy can be changed into a unit of any other kind without affecting the overall energy of the universe. This occurs in a so-called isolated or ideal system without any external exchange, in which neither matter nor energy can enter or exit but can only move around inside, and all processes are mechanical and reversible. It is a hypothetical system whose total energy-mass remains constant. In such a system, energy cannot be created or destroyed, only transformed from one variety to another. The principle reduces to the formula $\Delta E_{\text{universe}} = 0$, where the net change ($\Delta$) in the total energy of the universe ($E_{\text{universe}}$) always equals zero, because the total energy of the universe is an eternal constant.

The Second Law of Thermodynamics, also known as the Law of Energy Conversion or Non-Conservation, was also formulated in its initial form by Clausius. It is concerned with temperature and energy change and their irreversible propensities: heat always flows from hot to cold, never vice versa. It also involves the manner in which friction changes mechanical movement into heat, never the reverse. Clausius concluded that these were but two varieties of the same thing, a state of affairs which he described as entropy: the unusable energy in the form of heat which is lost in the production process[4].

The Second Law says that, in physical terms, a system reaches its death when it reaches maximum disorder: when energy in the form of heat stops flowing (thermal death), or when it contains as much information as it can handle (information overload). This state of maximum disorder is reached when life is deprived of its capacity to evolve, so that it effectively becomes part of the lifeless universe[5]. These principles apply to all physical systems, including life itself, which must ultimately perish[6].

The principle involved is that all machines inevitably lose unusable heat in the production process. Take a steam engine. “The natural changes in such engines (heat escaping wastefully from hot boiler to cool radiator and work being changed wastefully into heat by friction) always exceed(s) the one and only unnatural change (heat being changed into work by pistons)”. So when the engine cools down, the heat energy still exists but it can no longer do any work since it has dissipated into the air. The result is a net increase in the entropy of the universe[7]. Similar principles apply to the heat changes wrought by other machines such as our own bodies and the life cycle of stars. So the positive and negative changes occurring in all real-life engines of the universe always combine so as to increase entropy – “Always!”[8]

In terms of the life cycle of the universe, considered on a global scale, this means that "everything is ultimately destined to destruction in the heat death of the universe. As the universe expands, it grows colder and colder as its energy is used up. Eventually all the stars will burn out, all matter will collapse into dead stars and black holes, there will be no life, no heat, no light—only the corpses of dead stars and galaxies expanding into endless darkness".[8.1]

Expressed mathematically, $\Delta S_{\text{universe}} > 0$; that is, the net change in the total entropy of the universe always exceeds zero, because the positive (natural) entropy changes always exceed the negative (unnatural) ones. Heat always flows from the hot areas to the cold areas, ultimately resulting in a lukewarm universe in which all temperatures have become equal and heat no longer flows at all. In the fullness of time, the universe will become a bland nothingness devoid of heat flow[9]. Life needs temperature differences to avoid being stifled by its own waste heat. So life will disappear.
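As a worked illustration (not drawn from the sources cited here), Clausius's definition of the entropy change for heat $Q$ transferred at absolute temperature $T$, namely $\Delta S = Q/T$, shows why heat flowing from hot to cold raises the total. The symbols $Q$, $T_h$ and $T_c$ and the numbers below are assumptions chosen for the example, and both bodies are assumed large enough that their temperatures barely change.

```latex
\begin{align*}
\Delta S_{\text{universe}}
  &= \underbrace{-\frac{Q}{T_h}}_{\text{hot body loses } Q}
   + \underbrace{\frac{Q}{T_c}}_{\text{cold body gains } Q}
   = Q\left(\frac{1}{T_c} - \frac{1}{T_h}\right) > 0
   \quad \text{whenever } T_h > T_c,\ Q > 0 \\[4pt]
\text{e.g. } Q = 1000\ \text{J},\ T_h = 500\ \text{K},\ T_c = 250\ \text{K}:
  \quad \Delta S_{\text{universe}}
  &= -2\ \text{J/K} + 4\ \text{J/K} = +2\ \text{J/K}
\end{align*}
```

The energy total is unchanged (the first law), yet the entropy total has risen (the second law). Running the same heat back from cold to hot would give $-2$ J/K, which is why that direction is never observed on its own.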

In two papers published in 1872 and 1877, Ludwig Boltzmann (1844-1906) reduced this principle to the mathematical formulation $S = k \log W$ (explained below), depicting the logarithmic connection between a particular system's entropy or disorder and the number of ways the system's atoms can be assembled.

Building on Maxwell's idea of statistical averages, Boltzmann started off with the assumption that a system will evolve from a less probable state to a more probable state when agitated by heat or mechanical vibration until thermal equilibrium is reached. At equilibrium, the system will be in its most probable state, when the entropy is a maximum. It is impossible to calculate the motion of billions and billions of particles, but the probability method can give direct answers for the most probable state. [9.1]

There is even a small probability that all the molecules of a system of confined gas might appear for an instant in just one corner of the container. This possibility, called an energy fluctuation, must exist if the probabilistic interpretation of entropy is to be allowed. [9.2]
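To get a feel for how small that probability is, the following is a minimal sketch, assuming each molecule's position is independent and uniformly random inside the container. The function name, the choice of one eighth of the volume as the "corner", and the molecule counts are all hypothetical values picked for illustration, not figures from the sources cited.

```python
import math


def log10_prob_all_in_region(n_molecules: int, volume_fraction: float) -> float:
    """Return log10 of the probability that every molecule is found at once
    in a region covering `volume_fraction` of the container.

    Assumes independent, uniformly distributed positions, so the
    probability itself is volume_fraction ** n_molecules.
    """
    if n_molecules < 0:
        raise ValueError("n_molecules must be non-negative")
    if not 0.0 < volume_fraction <= 1.0:
        raise ValueError("volume_fraction must be in (0, 1]")
    # Work with logarithms: the raw probability underflows to zero
    # long before n_molecules reaches laboratory-scale values.
    return n_molecules * math.log10(volume_fraction)


# Hypothetical "corner": one eighth of the container's volume.
CORNER = 1 / 8

for n in (10, 100, 10**22):
    exponent = log10_prob_all_in_region(n, CORNER)
    print(f"N = {n:.0e} molecules: probability is about 10^({exponent:.3g})")
```

The probability never reaches exactly zero, which is the point of the passage above, but for any realistic number of molecules the exponent is so large and negative that the fluctuation is never seen in practice.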

Here, Boltzmann provided the link between our microscopic and macroscopic understandings of the world. S is the entropy of a system and signifies how disordered the system is. This is its macroscopic feature. W tells us about the number of its different microscopic states, and k is a constant Boltzmann derived associating the two. The logarithm (log) of a number is the index or power by which another fixed value, the base, has to be raised to produce that number, and obviates the need for interminable calculations. Entropy being a quantity that measures the disorder of a system, the Boltzmann formula presents a mathematical formulation of entropy by looking at all the possible states that the system can occupy.

Each one of these states will occur with a certain probability that can be inferred from experiments or other principles. The logarithm of these probabilities is then taken and the total entropy of the system is then a direct function of this and tells us its degree of disorder[10]. This work made Boltzmann the creator of statistical mechanics, a method in which the properties of macroscopic bodies are predicted by the statistical behaviour of their constituent microscopic parts. These new ideas – using probabilities and statistics of microscopic systems to predict the macroscopic properties which can be measured in the laboratory (like temperature, pressure, etc.) – underlie everything that was to come in quantum theory. [11]
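A toy calculation may make the formula concrete. The sketch below is an illustration only, assuming a hypothetical system of 100 coins in which the macroscopic state is the number of heads and W is the number of distinct arrangements giving that count. The function names are invented for the example, and the logarithm is taken as the natural logarithm, as is conventional for Boltzmann's formula.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in joules per kelvin (SI value)


def microstates(n_coins: int, n_heads: int) -> int:
    """Number of distinct arrangements W with exactly n_heads heads."""
    return math.comb(n_coins, n_heads)


def boltzmann_entropy(w: int) -> float:
    """Entropy S = k ln W for a macrostate with w microstates."""
    return K_B * math.log(w)


N_COINS = 100  # hypothetical system size

for heads in (0, 10, 25, 50):
    w = microstates(N_COINS, heads)
    s = boltzmann_entropy(w)
    print(f"{heads:3d} heads: W = {w:.3e}, S = {s:.3e} J/K")
```

The perfectly ordered state (no heads) can be arranged in only one way, so its entropy is zero, while the evenly mixed state has by far the most arrangements and therefore the highest entropy. That is the sense in which the most disordered state is also the most probable one.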

[1] The path to formulation of the First and Second Laws is considered in Michael Guillen, “An Unprofitable Experience – Rudolf Clausius and the Second Law of Thermodynamics”, Five Equations that changed the world – The Power and Poetry of Mathematics, Hyperion, New York, 1995, 165ff; Robert P Crease, “The Scientific Equivalent of Shakespeare: The Second Law of Thermodynamics”, Chapter 5 of The Great Equations – Breakthroughs in Science from Pythagoras to Heisenberg, Norton, New York, 2008, 111 ff; Brian Greene, The Fabric of the Cosmos – Space, Time and the Texture of Reality, Penguin, London, 2004, 151-176.

[2] Bill Bryson, A Short History of Nearly Everything, Broadway Books, 2003, 77.

[3] As enunciated by Rudolf Clausius, cited in Crease, op cit, 111.

[4] “Laws of Thermodynamics”, Adam Hart-Davis (ed), Science- The Definitive Visual Guide, DK, 2009, 180-1.

[5] Vlatko Vedral applies the same principles to information theory: Vlatko Vedral, Decoding Reality, op cit, Ch 5, pp 58-59.

[6] Ibid.

[7] Guillen, op cit, 206.

[8] Ibid.

[8.1] Michael Shermer, quoting theologian William Lane Craig, "Alvy's error and the meaning of life", Scientific American, February 2018. 61.

[9] Guillen, op cit, 208.

[9.1] JP McEvoy, Introducing Quantum Theory: A Graphic Guide, Icon Books Ltd. Kindle Edition, locations 263-287.

[9.2] Ibid.

[10] See Vlatko Vedral, Decoding Reality, op cit, 61,62.

[11] McEvoy, op cit.

Boltzmann was arguing that the second law was simply the result of the fact that in a world of mechanically colliding particles, disordered states are the most probable. Consider gas molecules where each collision results in increasingly disordered velocity distributions eventually reaching an equilibrium resulting in maximum microscopic disorder or in other words a state of maximum entropy. Because there are so many more possible disordered states than ordered ones, a system will almost always be found in the state of maximum disorder.

We've mentioned entropy a few times, but what is the key to understanding the processes involved? In General Chemistry, Darrell Ebbing utilises the example of a deck of cards "fresh out of the box, arranged by suit and in sequence from ace to king". At this point, the deck is in its ordered state. "Shuffle the cards and you put them in a disordered state. Entropy is a way of measuring just how ordered that state is and of determining the likelihood of particular outcomes with further shuffles."[1]
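The card example can be put into numbers. The following sketch is an added illustration, assuming a standard 52-card deck and a perfectly random shuffle. It counts the possible orderings W and takes the logarithm, in the spirit of Boltzmann's formula but measured in bits rather than joules per kelvin.

```python
import math

DECK_SIZE = 52  # standard deck, no jokers (assumption for this example)

# Every ordering of the deck is one "microstate".
orderings = math.factorial(DECK_SIZE)

# log2(W): the number of bits needed to single out one ordering.
entropy_bits = math.log2(orderings)

print(f"Possible orderings W: {orderings:.3e}")
print(f"log2(W): {entropy_bits:.1f} bits")
print(f"Chance a random shuffle restores factory order: {1 / orderings:.3e}")
```

Only one of those orderings is the factory sequence, while practically all of the others look shuffled, which is why shuffling an ordered deck disorders it and shuffling a disordered deck never seems to order it.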

Billions of years ago, when a hot nearly uniform gaseous mixture of hydrogen and helium coalesced into a set of conditions which produced the so-called “big bang”, the universe began in a state of low entropy (high order), but ever since the entropy of the universe has been getting higher and higher and the overall net amount of disorder gradually increasing, becoming more and more chaotic and disorganized[2]. The Second Law dictates that all changes in nature proceed in the one direction, and Boltzmann’s equation, since etched into his tombstone, reveals that the universe is in the process of slowly winding down, slowly relaxing and slowly becoming more chaotic[3].

Second law scepticism

The second law is generally considered to be, and described as, “one of the most fundamental laws in science”[4]. However, not everyone agrees and some now pour cold water on the tendency towards increasing entropy and heat death scenarios (no pun intended), arguing instead that the universe is characterised by increasing order and increasing information. The British-born American theoretical physicist and mathematician, Freeman Dyson, famous for his work in quantum electrodynamics, solid-state physics, astronomy and nuclear engineering, says that the evolution of life is a part of the evolution of the universe, which evolves with increasing amounts of information embodied in ordered structures, galaxies and stars and planetary systems; and that in the living and in the nonliving world, we see a growth of order, starting from the featureless and uniform gas of the early universe and producing the magnificent diversity of weird objects that we see in the sky and in the rain forest[5].

Dyson says that thanks to the discoveries of astronomers in the twentieth century, we now know that heat death is a myth[6]. The idea of heat death was based on an idea that he refers to as the cooking rule, which states that “a piece of steak gets warmer when we put it on a hot grill. More generally, the rule says that any object gets warmer when it gains energy, and gets cooler when it loses energy. Humans have been cooking steaks for thousands of years, and nobody ever saw a steak get colder while cooking on a fire. The cooking rule is true for objects small enough for us to handle. If the cooking rule is always true, then the argument for the heat death is correct”.

However, the cooking rule is not true for objects of astronomical size for which gravitation is the dominant form of energy, he says. Take the sun! As the sun loses energy by radiation, it becomes hotter and not cooler. Since the sun is made of compressible gas squeezed by its own gravitation, loss of energy causes it to become smaller and denser, and the compression causes it to become hotter. For almost all astronomical objects, gravitation dominates, and they have the same unexpected behavior. Gravitation reverses the usual relation between energy and temperature. In the domain of astronomy, when heat flows from hotter to cooler objects, the hot objects get hotter and the cool objects get cooler. As a result, temperature differences in the astronomical universe tend to increase rather than decrease as time goes on. So, there is no final state of uniform temperature, and there is no heat death. Gravitation gives us a universe hospitable to life. Information and order can continue to grow for billions of years in the future, as they have evidently grown in the past.
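The reversal Dyson describes is commonly explained with the virial theorem. The sketch below is a standard textbook argument added for illustration, not Dyson's own derivation, and it assumes an idealised ball of monatomic ideal gas held in equilibrium by its own gravity. The symbols $K$ (thermal kinetic energy), $U$ (gravitational potential energy), $N$ (number of particles) and $T$ (mean temperature) are introduced here for the example.

```latex
\begin{align*}
2K + U &= 0
  && \text{(virial theorem for a self-gravitating gas in equilibrium)} \\
E &= K + U = -K
  && \text{(total energy)} \\
K &= \tfrac{3}{2} N k T
  \;\Rightarrow\; E = -\tfrac{3}{2} N k T,
  \qquad \frac{dE}{dT} = -\tfrac{3}{2} N k < 0
  && \text{(negative heat capacity)}
\end{align*}
```

Because $dE/dT$ is negative, radiating energy away (lowering $E$) raises $T$: the body contracts, and the gravitational energy released more than makes up for what was lost. This is the opposite of the cooking rule, under which $dE/dT$ is positive.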

This then leads one to inquire, what exactly is the information capacity of the universe, considered as a hard drive? Huge, said Seth Lloyd, a professor of quantum computing at the Massachusetts Institute of Technology, in 2002[7]. Something like $10^{90}$ bits. Using the same technique as Lloyd, César Hidalgo in another thought experiment estimates the storage capacity of the Earth as something like $10^{44}$ bits, of which only a small fraction has been used. Why so? Because of the Second Law of Thermodynamics. Entropy reigns, making order disappear. “Entropy rich systems can exist only as long as they “sweat” entropy at their seams, paying for their high levels of organisation by expunging heat”[8]. ‘Entropy is the price of structure’[9].

But order does exist in our universe, says Hidalgo[10], thanks mainly to three things: the flow of energy, the materialisation of solids and the capacity of matter, such as trees for example, to compute, and despite the forces arrayed against the emergence of order, information gradually grows. However, although humans are partly responsible for the growth of information on earth, we are creating a surprisingly small amount of information, the author concludes, largely because our capacity to do so is limited because our ability to create networks of people, which may otherwise have assisted in the process, is constrained in part by historical, institutional and technological factors.

[1] Cited by Bill Bryson, A Short History of Nearly Everything, Broadway Books, 2003, 116.

[2] Greene, (2004) op cit, 173. Roger Penrose says that inflationary theory doesn’t explain why there was such low entropy in the first place. He suggests that ultimately black holes will consume all the matter in the universe. Finally the black holes will evaporate leaving a universe with nothing but energy and a state of low entropy (the state in which it began), bringing this period to an end and triggering the next time period with another big bang: “Will that be one bang or two”, Night Sky, Vol 306, December 2010.

[3] Guillen, op cit, 212; Crease, op cit, 125.

[4] Vedral, op cit, 59.

[5] Freeman Dyson, review of James Gleick’s The Information: A History, a Theory, a Flood, New York Review of Books, 10 March 2011.

[6] Citing the chapter, “How Order Was Born of Chaos,” in the book Creation of the Universe, World Scientific Publishing Co., Singapore, 1989, by Fang Lizhi, leading Chinese astronomer and political dissident now at the University of Arizona, and his wife Li Shuxian.

[7] Cited in César A Hidalgo, “Planet Hard Drive”, Scientific American, August 2015, 63-65.

[8] Ibid.

[9] Attributed to Ilya Prigogine, Nobel Prize winning chemist.

[10] Op cit at 65.
